Track AI Code all the way to production

An open-source Git extension for tracking AI code through the entire SDLC.


Just Install and Commit

Install the Git extension and then work as usual. Git AI uses agent hooks to track every line of AI code that enters your codebase.

- Works with every coding agent

- AI attributions survive rebases, merges, and cherry-picks

- No workflow changes

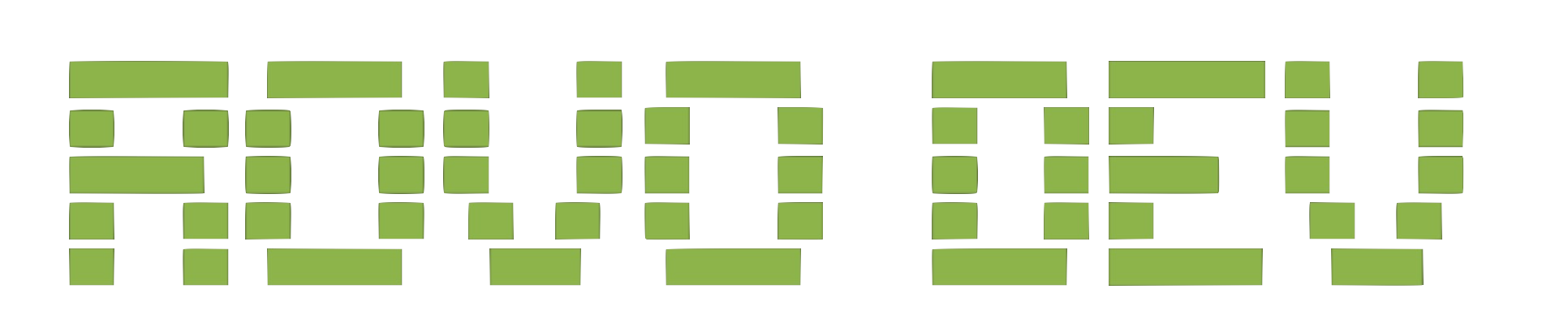

AI Blame

Git AI links each line of AI code to the agent, model, and prompt that generated it, helping engineers and their agents understand the "why" behind every line.
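Conceptually, an AI Blame lookup answers "which prompt claims this line?". The sketch below assumes attributions for one file are stored as a mapping from prompt ID to a line spec (comma-separated line numbers or inclusive ranges such as `6-8` or `16,21,25`, the shape used in the note example on this page). The helpers `parse_line_spec` and `blame_line` are hypothetical illustrations, not part of Git AI's API.

```python
from __future__ import annotations


def parse_line_spec(spec: str) -> set[int]:
    """Expand a line spec such as "6-8" or "16,21,25" into a set of line numbers."""
    lines: set[int] = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            # Inclusive range, e.g. "6-8" -> {6, 7, 8}
            start, end = (int(x) for x in part.split("-", 1))
            lines.update(range(start, end + 1))
        elif part:
            lines.add(int(part))
    return lines


def blame_line(attributions: dict[str, str], prompts: dict[str, dict], line: int) -> dict | None:
    """Return the metadata of the prompt that claims `line`, or None if no prompt does."""
    for prompt_id, spec in attributions.items():
        if line in parse_line_spec(spec):
            return {"prompt_id": prompt_id, **prompts.get(prompt_id, {})}
    return None


# Hypothetical data for one file (prompt ID -> line spec, prompt ID -> metadata).
attributions = {"promptid1": "6-8", "promptid2": "16,21,25"}
prompts = {
    "promptid1": {"agent_id": {"tool": "copilot", "model": "Codex 5.2"}, "human_author": "Alice Person"},
    "promptid2": {"agent_id": {"tool": "cursor", "model": "Sonnet 4.5"}, "human_author": "Jeff Coder"},
}

print(blame_line(attributions, prompts, 7))   # attributed to promptid1
print(blame_line(attributions, prompts, 10))  # None: no prompt claims line 10
```

A linear scan is fine for a sketch; a real tool would more likely build a per-file line index once rather than re-expanding specs on every lookup.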

Ask the Author (Agent)

See AI code you don't understand? The /ask skill lets you talk to the agent that wrote any piece of code about how to use it, the architecture decisions behind it, and the engineer's original intent.

- Cross-agent: ask Cursor about code that Claude Code wrote

- Answers include the original intent, not just what the code does

How it works

- Supported agents mark the code they generate by calling `git-ai checkpoint`

- When you commit, AI attributions are saved in a Git Note

- Git AI preserves these attributions through rebases, squashes, amends, merges, resets, cherry-picks, and more

```
hooks/post_clone_hook.rs
  promptid1 6-8
  promptid2 16,21,25
---
{
  "prompts": {
    "promptid1": {
      "agent_id": { "tool": "copilot", "model": "Codex 5.2" },
      "human_author": "Alice Person",
      "summary": "Reported on GitHub #821: Git AI tries fetching authorship notes for interrupted (CTRL-C) clones. Fix: guard note fetching on successful clone.",
      ...
    },
    "promptid2": {
      "agent_id": { "tool": "cursor", "model": "Sonnet 4.5" },
      "human_author": "Jeff Coder",
      "summary": "Match the style of Git Clone's output to report success or failure of the notes fetch operation.",
      ...
    }
  }
}
```
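To make the structure of the example note concrete, here is a minimal parser sketch. It assumes a layout inferred from the example rather than taken from the published standard: each file path sits on its own unindented line, followed by indented `<prompt id> <line spec>` lines, then a `---` separator line and a JSON object with a `prompts` key. The function name `parse_authorship_note` is hypothetical; consult the open standard on GitHub for the authoritative format.

```python
from __future__ import annotations

import json


def parse_authorship_note(text: str) -> tuple[dict[str, dict[str, str]], dict]:
    """Split a note into (file path -> {prompt id -> line spec}, JSON metadata).

    Assumed layout (inferred from the example, not from a spec):
        <file path>
          <prompt id> <line spec>
        ---
        <JSON object>
    """
    attestation, sep, metadata = text.partition("\n---\n")
    if not sep:
        raise ValueError("missing '---' separator between attributions and metadata")

    files: dict[str, dict[str, str]] = {}
    current: str | None = None
    for raw in attestation.splitlines():
        if not raw.strip():
            continue  # skip blank lines
        if raw[0].isspace():
            # Indented line: "<prompt id> <line spec>" for the most recent file path.
            if current is None:
                raise ValueError(f"attribution before any file path: {raw!r}")
            prompt_id, spec = raw.split(maxsplit=1)
            files[current][prompt_id] = spec.strip()
        else:
            # Unindented line starts a new file section.
            current = raw.strip()
            files[current] = {}
    return files, json.loads(metadata)


# The "..." placeholders from the example are dropped so the JSON is valid.
note = """hooks/post_clone_hook.rs
  promptid1 6-8
  promptid2 16,21,25
---
{"prompts": {"promptid1": {"agent_id": {"tool": "copilot", "model": "Codex 5.2"}},
             "promptid2": {"agent_id": {"tool": "cursor", "model": "Sonnet 4.5"}}}}"""

files, meta = parse_authorship_note(note)
print(files)
print(meta["prompts"]["promptid2"]["agent_id"]["model"])
```

Splitting line attributions from the JSON metadata keeps the per-line part compact and easy to rewrite when history changes, while the richer prompt details live in one place.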

Git AI maintains the open standard for tracking AI authorship in Git Notes. Learn more on GitHub.

Our Choices

No workflow changes: just prompt and commit. Git AI tracks AI code accurately without cluttering your Git history.

"Detecting" AI code is an anti-pattern: Git AI does not guess whether a hunk is AI-generated. Supported agents report exactly which lines they wrote, giving you the most accurate attribution possible.

Local-first: works 100% offline, no login required.

Git-native and open standard: Git AI uses an open standard for tracking AI-generated code with Git Notes.

Transcripts stay out of Git: they are stored locally in a private SQLite database, or in your team's cloud or self-hosted prompt store. This keeps your repos lean and free of sensitive information, and gives you control over your data.

Open Source

Install the Git AI extension. It's free, local-first, and open source.

- Accurate AI attribution on every commit

- AI Blame in your IDE

- Local storage for every agent session

- Let agents read past prompts while planning

- Measure % AI Code and AI-accepted rate for every commit
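As a rough illustration of what "% AI Code" for a commit could mean, the sketch below assumes it is the share of the commit's added lines that are AI-attributed, with attributions shaped as file path to {prompt ID to line spec}. That definition, the helper names `expand` and `percent_ai_code`, and the sample numbers are all assumptions, not Git AI's actual formula. The total added-line count could come from something like `git diff --numstat`.

```python
from __future__ import annotations


def expand(spec: str) -> set[int]:
    """Expand a line spec such as "6-8" or "16,21,25" into line numbers."""
    lines: set[int] = set()
    for part in spec.split(","):
        part = part.strip()
        if "-" in part:
            start, end = (int(x) for x in part.split("-", 1))
            lines.update(range(start, end + 1))  # inclusive range
        elif part:
            lines.add(int(part))
    return lines


def percent_ai_code(attributions: dict[str, dict[str, str]], added_lines: int) -> float:
    """Percentage of a commit's added lines that are AI-attributed (assumed definition)."""
    if added_lines <= 0:
        return 0.0
    ai_lines = 0
    for specs in attributions.values():
        covered: set[int] = set()
        for spec in specs.values():
            covered |= expand(spec)  # union, so overlapping specs are not double-counted
        ai_lines += len(covered)
    return 100.0 * ai_lines / added_lines


# Hypothetical commit: 6 AI-attributed lines out of 20 added lines.
commit = {"hooks/post_clone_hook.rs": {"promptid1": "6-8", "promptid2": "16,21,25"}}
print(percent_ai_code(commit, added_lines=20))  # 30.0
```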

For Teams

Cloud or self-hosted: unified analytics and context storage. Accelerate your team's AI adoption and ensure that all your new AI code can be maintained and built upon.

- Track % AI Code across all your repos

- Track AI Code through the entire SDLC: Generated → Accepted → PR → Merged → Durability

- PR-level % AI Code, token cost, and AI efficacy

- Link intent, requirements, and architecture decisions to generated code

- Make your agents smarter by using past prompts as context

- Own your prompts — don't let agent vendors lock you in

- Automated insights into what's working

- Personalized tips for every engineer

- Compare AI effectiveness across teams, repositories, and task types
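The Generated → Accepted → PR → Merged → Durability pipeline listed above behaves like a funnel. As an illustration only, assuming each stage is measured as a count of AI-attributed lines (and treating "Durability" as lines still present some time after merge, which is an assumption), stage-to-stage retention could be computed as below. The helper names and the sample counts are hypothetical and do not describe how Git AI's analytics are calculated.

```python
from __future__ import annotations

# Stage names from the list above; how each count is measured is an assumption.
STAGES = ["Generated", "Accepted", "PR", "Merged", "Durability"]


def stage_rates(counts: dict[str, int]) -> dict[str, float]:
    """Fraction of AI lines carried from each stage to the next."""
    rates: dict[str, float] = {}
    for prev, cur in zip(STAGES, STAGES[1:]):
        before = counts[prev]
        rates[f"{prev} -> {cur}"] = counts[cur] / before if before else 0.0
    return rates


def end_to_end(counts: dict[str, int]) -> float:
    """Share of generated AI lines that reach the final (Durability) stage."""
    first, last = counts[STAGES[0]], counts[STAGES[-1]]
    return last / first if first else 0.0


# Hypothetical line counts for one team over some period.
counts = {"Generated": 1000, "Accepted": 600, "PR": 540, "Merged": 500, "Durability": 450}
for transition, rate in stage_rates(counts).items():
    print(f"{transition}: {rate:.0%}")
print(f"Generated -> Durability: {end_to_end(counts):.0%}")
```

Looking at each transition separately shows where AI code drops out (for example, rejected suggestions versus code removed after merge), which a single end-to-end percentage would hide.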